Talking to employees about AI without hype or fear: A practical internal comms guide for leaders

AI is already shaping how work gets done, from drafting content and analysing data to translating messages and supporting decisions. Employees know this. What they often lack is clear, grounded communication about what AI really means for their work.

Often, internal conversations about AI swing between hype and fear. On one side are inflated promises of transformation and productivity. On the other are anxieties about job loss, surveillance and loss of control. Neither extreme helps people to do their best work.

This guide offers practical, evidence‑based guidance on how leaders can talk to employees about AI in a way that is calm, credible and constructive.

1. Begin by addressing employee concerns

As AI becomes more visible in everyday work, employees are trying to make sense of what it means for their roles, skills and future. Their questions are reasonable, though many are shaped by headlines, hype cycles and alarmist narratives rather than by how AI systems actually work today. Leaders have a critical role to play in steadying the conversation.

1.1 Common misconceptions about AI

1. “AI thinks like a human – or soon will”

Because modern AI can sound fluent and confident, people often assume it understands, reasons or feels in human-like ways. It does not. AI systems predict patterns based on data; they do not think or comprehend in a human sense.¹,² This misconception fuels both fear (“AI will outsmart us”) and misplaced trust (“the system knows best”).

2. “AI is smarter than humans and objectively correct”

Research consistently shows that people overestimate AI’s intelligence and neutrality.³ Algorithms are often seen as more accurate or less biased than humans, even though they inherit the limitations and biases of their training data.³ The result is a double risk: fear of being outperformed, and blind reliance on automated outputs.

3. “AI is basically just ChatGPT”

Many employees reduce the entire field of AI to the generative AI tools they use most.4 This narrow view confuses capabilities, risks and appropriate use. In reality, AI is a family of technologies ranging from basic automation and search to predictive analytics and machine learning, rather than a single chatbot.

4. “AI makes workplaces and collaboration unnecessary”

Some employees worry that AI will push work towards remote, automated and efficiency-only models, eroding collaboration and community. Research shows this fear is misplaced.5,6 Human collaboration, emotional exchange and social learning remain essential, and cannot be replicated by AI.

5. “AI will replace my job”

Fear of job loss is one of the strongest emotional responses to AI. While some tasks are automatable, full job replacement is currently uncommon.7 Displacement risk varies widely by task type, skill level and industry, and is often overstated in public debate.8 AI tends to reshape jobs far more than it eliminates them – but without clear communication, employees fill the gaps with worst-case assumptions.

1.2 Addressing fear of job displacement

Anxiety about job loss is rational and deeply emotional. It increases when employees feel excluded from AI decisions, lack visibility into organizational plans or do not understand how AI works.4,7

Three principles help leaders address this proactively:

- Be explicit about intent. Silence creates space for fear. Employees need to hear how AI will support, not sideline, human expertise.

- Shift the narrative from replacement to redesign. Evidence suggests that tasks – not whole jobs – are most affected by AI.8 Talking about redesigned workflows and human strengths builds confidence.

- Invest in skills, literacy and ethical guardrails. AI literacy gives employees a sense of control.4 Clear ethical and governance practices reinforce that AI will be used responsibly.

When leaders acknowledge employee concerns, address them with evidence and actively involve people in shaping change, adoption is more likely to succeed.

2. Be clear about how AI supports productivity

Abstract promises about transformation rarely reassure employees. What helps is showing, in practical terms, how AI can reduce friction, support better decisions and free people to focus on meaningful work.

2.1 Practical applications in daily work

Across industries, AI is reshaping routine tasks and augmenting knowledge work in tangible ways.

Task automation

AI can speed up repetitive or time-consuming tasks such as summarizing documents, producing first-draft content, organizing research and preparing data. McKinsey estimates that corporate AI use cases could unlock up to $4.4 trillion in productivity gains.9

Communication and content creation

Generative AI is widely used for drafting emails and reports, preparing for meetings and supporting performance reviews. Surveys of US workers show that nearly a third of generative AI users now spend an hour or more each day collaborating with these tools, mainly for writing, summarizing and editing.10

Problem-solving and decision support

AI can help interpret data, identify patterns and generate options. It is especially useful when employees are under time pressure or dealing with complex information.

Quality improvement and time creation

Studies show that people often complete certain tasks faster and with higher quality when using generative AI, especially for drafting and customer responses. At the same time, motivation can drop when people switch back to tasks without AI support, which changes how they perceive the value of their work.11 This reinforces the need for thoughtful task design that keeps human judgement and motivation at the core.

2.2 Practical examples from the field

Real-world examples help make AI feel tangible and human-centred.

Instant multilingual communication

A large European electronics retailer integrated DeepL translation directly into Viva Engage, enabling frontline staff to read or listen to internal messages in their preferred language.12 What once required orchestrated translation cycles and specialist support now happens instantly, improving access to information for a distributed workforce.

A conversational AI avatar for employee support

REWE, a German supermarket chain, piloted a hologram-based AI avatar modelled on the company’s Managing Director.13 Called GoRobert, the avatar provides conversational support to new employees, both in person and via Microsoft Teams. The initiative shows how AI can humanize support rather than replace it.

Responsible experimentation in creative work

At Hearst Networks, AI adoption is driven by creativity and purpose as much as efficiency.14 One example is the world’s first fully AI-generated historical reenactment documentary, broadcast on Sky History and nominated for an innovation award. The project demonstrated that AI can be a creative partner rather than a threat to creative roles.

3. Encourage effective human-AI collaboration

As organizations move beyond experimentation, the challenge shifts from using AI tools to working well with them. Human–AI collaboration is becoming a core capability for modern teams. For example, McKinsey’s global Managing Partner recently announced that the firm’s workforce currently consists of 40,000 humans and 20,000 agents, and he predicts that within the next 18 months every McKinsey employee will be enabled by one or more agents.15 This is as much a cultural shift as a technical one. People collaborate effectively with AI when they understand its strengths and limits, trust it appropriately and feel safe to question its usage and outputs.

3.1 Build a collaborative mindset

Treat AI as a colleague, not an authority

Many employees are starting to use AI as a thinking partner rather than just a tool. In effective teams, AI supports reflection, sense-making and scenario exploration, while humans retain responsibility for context, judgement and creativity.

Clarify how work is delegated

Collaboration improves when three things are clear: who leads, how interaction happens and how improvement occurs over time.16 Employees need to know when humans are in control, when AI can act independently and how feedback loops work.

Carefully calibrate trust

Trust in AI develops through experience, not promises. It is shaped by context, usability and past interactions.17 The goal is not ‘maximum trust’, but appropriate trust. Too much leads to blind reliance; too little leads to underuse. Leaders should be transparent about limitations and encourage healthy scepticism.

Know when to combine insights

In some contexts, combining independent human and AI judgements outperforms either alone.18 This is especially useful in forecasting and complex decision-making, where diversity of perspective improves outcomes.

Keep humans in charge of meaning and creativity

In creative and exploratory work, humans need to stay firmly in the lead. AI can support discovery and analysis, but if it dominates too early, it can narrow thinking and reduce serendipity.19

3.2 Train for collaboration, not just tool use

Training should be designed as a holistic capability system, rather than a course catalogue.

At a minimum, employees need to learn:

- When to let AI lead, when humans should lead and when work should be shared.

- How to interpret AI suggestions and ask better questions.

- How to provide feedback that improves system performance.

Trust-building should also be taught explicitly. Small, safe‑to‑fail experiments help teams learn how to evaluate AI outputs and balance scepticism with openness.

This individual learning needs organizational backing: supportive leaders, clear norms for resolving conflicts between human and AI input, and ongoing transparency about how systems are monitored and improved.

4. Make ethics and transparency visible

Ethical AI requires robust policies as well as strong communication. Employees judge AI initiatives less by what is written in documents and more by how transparent, fair and accountable those initiatives feel in everyday practice.

Responsible AI implementation starts with clear principles (commonly fairness, transparency, accountability, privacy and security) and with practical controls over how AI tools are selected, configured and used in day-to-day work. For leaders, the challenge is making these principles visible and meaningful to employees, rather than abstract or legalistic.

Research shows that transparency in AI decision-making (for example, explaining how an algorithm works or why it produced a given recommendation) tends to increase perceived effectiveness and overall trust in AI systems.20 Transparency also shapes how employees interpret AI: when people understand how a system works and how well it performs, they are more likely to see it as a helpful tool rather than a threat.20

At the same time, transparency is not a silver bullet. For some employees, increased visibility into AI logic can raise discomfort or anxiety, particularly if decisions feel complex or consequential. Transparency must therefore be paired with guidance, dialogue and psychological safety so people can question AI decisions without fear.

For digital workplace teams, practical transparency measures include:

- Providing clear descriptions of each AI tool’s purpose, data sources and limitations in user-facing documentation and onboarding.

- Offering explanations for key AI-driven decisions, such as recommendations, classifications or risk scores, particularly in HR, performance or compliance contexts.

- Communicating performance metrics, known limitations and monitoring results so users see how AI is evaluated and improved over time.

When ethics and transparency are treated as everyday communication responsibilities, rather than compliance exercises, trust in AI-enabled work is more likely to grow.

5. Keep the conversation open

A culture of open communication is essential to ethical and effective AI adoption, because it determines whether employees feel able to question, challenge and improve AI-enabled ways of working. Communication and feedback mechanisms should be designed into AI initiatives at the outset, rather than added after tools are live.

5.1 Build feedback loops into everyday work

Structured mechanisms, such as regular pulse surveys, retrospectives and suggestion channels, give employees opportunities to share their experiences. These mechanisms are particularly important in hybrid and distributed workplaces, where informal signals are easier to miss.

Practical design choices include:

- Scheduling regular pulse surveys or check-ins following AI rollouts (e.g. after introducing copilots or analytics features), with clear expectations about how feedback will be used.

- Integrating feedback collection into existing platforms (chat, intranet, HR portals) so commenting on AI-enabled processes is as easy as reporting an IT issue.

- Using analytics to aggregate and visualize feedback at scale, then sharing high-level findings and next steps with employees to reinforce that speaking up leads to action.

Over time, these loops normalize discussion about AI’s impact on work as part of everyday practice rather than an exception reserved for crises.

5.2 Close the loop – consistently

Closing the feedback loop is vital to sustaining trust and engagement. When organizations do not respond, or appear defensive, employees quickly disengage from feedback channels, undermining the very mechanisms intended to surface risks and opportunities.

Leaders can strengthen the loop by:

- Setting clear ownership and response times for AI-related feedback, including who is responsible for review and follow-up.

- Grouping suggestions and concerns (e.g. usability, fairness, workload, wellbeing) and communicating which areas are being prioritized and why.

- Sharing concrete examples where employee input led to changes, reinforcing that critical feedback is valued.

- Maintaining anonymous reporting options for sensitive topics, especially where power dynamics or fear of retaliation could silence important signals.

By consistently listening and responding to employees’ experiences with AI, organizations not only reduce risk but also co-create more trusted and effective AI-enabled workplaces.

Read our related article, Understanding AI readiness: How to prepare your digital workplace.

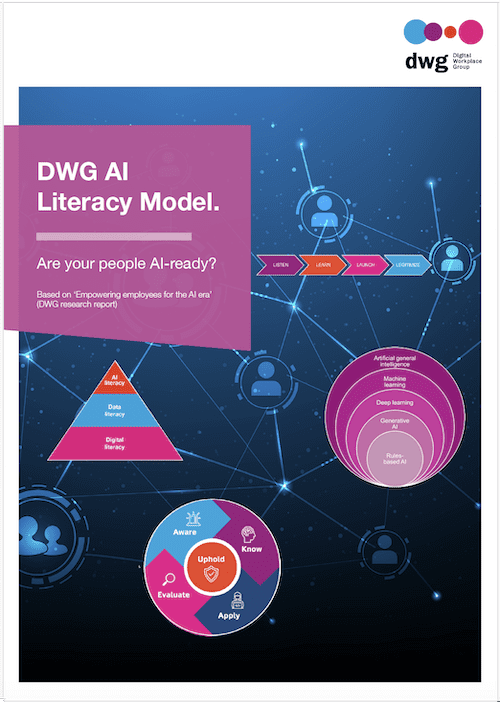

Related downloads

(DWG members can download full versions of all research via the member extranet)

Read more about Artificial intelligence and automation in our Knowledge Hub.

References

1 A comprehensive review of AI myths and misconceptions (Frank G Nussbaum).

2 15 biggest myths about artificial intelligence (ScienceNewsToday).

3 Myths, mis‑ and preconceptions of artificial intelligence (A Bewersdorff et al., Computers & Education: Artificial Intelligence).

4 Debunking 5 artificial intelligence myths (Minnesota Carlson School).

5 New study on which workers are losing jobs to AI (Megan Cerullo, CBS News).

6 MIT Iceberg Index on AI displacement (MacKenzie Sigalos, CNBC).

7 When usefulness fuels fear: The paradox of generative AI dependence and the mitigating role of AI literacy (Jiahui Liu, International Journal of Human–Computer Interaction).

8 Ten myths about AI in education (Louie Giray, HLRC).

9 Superagency in the workplace: Empowering people to unlock AI’s full potential (McKinsey).

10 The impact of generative AI on work productivity (Alexander Bick et al., Federal Reserve Bank of St Louis).

11 Research: Gen AI makes people more productive – and less motivated (Yukun Liu et al., Harvard Business Review).

12 Internal communications in the age of AI (Digital Workplace Impact podcast, Episode 153).

13 REWE digital puts employee support avatars to the test (REWE).

14 Hearst Networks’ approach to building change agility with purpose (Digital Workplace Impact podcast, Episode 157).

15 Where McKinsey – and consulting – go from here (HBR IdeaCast podcast, Episode 1060).

16 Deconstructing human–AI collaboration: Agency, interaction, and adaptation (Steffen Holter & Mennatallah El‑Assady, Computer Graphics Forum).

17 Collaborative human–AI trust (CHAI‑T): A process framework for trust in human–AI collaboration (Melanie J McGrath et al., Cornell University, arXiv).

18 Consolidating human–AI collaboration research in organizations: A literature review (Ying Liu & Lei Shen, Journal of Computer, Signal, and System Research).

19 AI in design idea development: A workshop on creativity and human–AI collaboration (Fabio A Figoli et al., Design Research Library Biennial Conference Series).

20 Artificial intelligence decision-making transparency and employees’ trust in AI (Liangru Yu & Yi Li, Behavioral Sciences).
