Designing AI literacy programmes that stick: Empowering future innovators

Most organizations accept that their people need ‘AI skills’. But too many AI literacy programmes stop at basic guidance and prompt tips. If AI is going to support quality work rather than just create ‘noise’, employees need something deeper: critical thinking, sound judgement and a working understanding of how these systems actually produce their outputs.

What do we mean by AI literacy?

AI literacy is more than knowing how to use a specific tool. It’s the mix of knowledge and skills that helps someone to recognize where AI is in play, make sense of what it’s doing, and decide how, or indeed whether, to use its results. This definition gives digital workplace, HR and L&D leaders a helpful test: if a programme only teaches people how to prompt, it probably isn’t building true AI literacy.

One useful framework defines three dimensions of AI literacy: cognitive, affective and sociocultural.[1] The cognitive piece covers the basics: concepts like data, models and training, and the ability to apply them when looking at AI‑generated content or decisions. The affective dimension is about how people feel (confidence, anxiety, trust) and whether they feel able to question AI rather than either dodge it or defer to it. The sociocultural element brings in ethics and power: ‘Who is affected by AI?’, ‘Whose data is being used?’ and ‘What does “fairness” really look like in a specific context?’.

Why AI literacy is now on the leadership agenda

AI is pervasive across business functions, from hiring and performance management to customer service, content creation and more – often spreading faster than governance can keep pace. AI literacy is now a leadership responsibility: without it, organizations run the risk of misuse, poor decisions and pockets of underground experimentation.[2] When employees don’t understand how AI works or what it can and can’t do, they’re more likely to either ignore it completely or trust it far too much.

Policy signals point in the same direction. The U.S. Department of Labor (DOL) AI Literacy Framework[3] treats AI literacy as a foundational worker capability and sets out core content areas to support national training efforts. It stresses that workers should understand where AI shows up in employment practices, how automated systems affect them, and what rights and responsibilities they have in AI‑mediated workplaces. In Europe, the EU AI Act[4] goes further: its Article 4 requires providers and deployers of AI systems to take measures to ensure a sufficient level of AI literacy among their staff, and the Act also emphasizes transparency and human oversight, especially around higher‑risk use cases. Together, these create a strong nudge for organizations to take AI literacy seriously as part of risk, compliance and culture.

AI tends to automate repetitive and routine tasks and to shift human work towards more complex problem‑solving, collaboration and oversight. AI adoption is reshaping skills profiles across industries and increasing the importance of higher‑order cognitive and social skills. This only works if people are equipped to play those new roles; without AI literacy, employees are being asked to supervise systems they don’t really understand.

What goes into an effective AI literacy programme?

Rather than running vague awareness sessions, effective programmes are clear about the specific competencies they’re trying to build – for example, ‘Interpret AI‑generated outputs and identify when human review is needed’ – and then design learning activities around those. They use learner‑centred, interactive methods rather than long lectures, and connect concepts directly to real situations where learners encounter AI.

In practice, that might mean:

- explaining and visualizing how a model is trained and why data quality matters

- creating space for people to talk about their hopes and fears about AI and how it’s landing in their work

- using examples to explore how AI‑mediated decisions can impact groups differently, and where fairness and accountability come in.

Effective AI literacy programmes are designed with specific roles in mind, matching competencies to job families and roles. This means distinguishing, for example, between baseline AI awareness for all staff, deeper operational skills for those who use AI every day, and stronger emphasis on oversight, ethics and risk for managers and leaders. This role‑based view helps avoid the pattern where some groups feel overwhelmed while others find the content too basic.

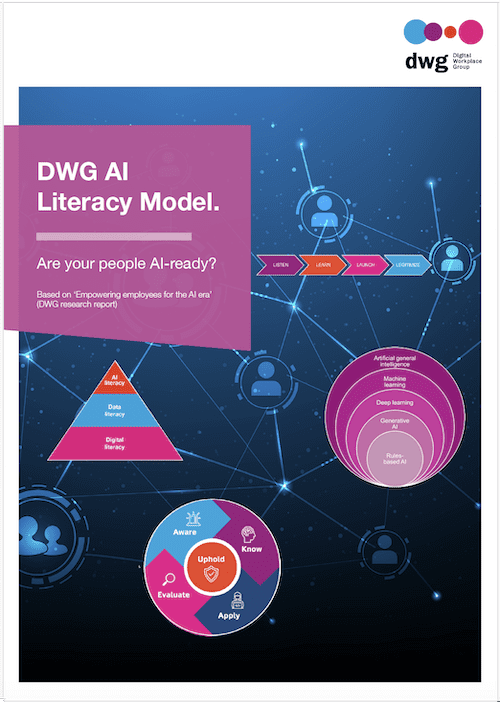

DWG’s AI Literacy Model describes five complementary dimensions that help employees progress from basic awareness to responsible, effective use of AI at work: AWARE (awareness and acceptance of AI capabilities); KNOW (understanding how to use AI at work); APPLY (using AI to augment tasks and projects); EVALUATE (critically evaluating validity and reliability); and UPHOLD (using AI responsibly). Use this as a shared language to align learning pathways, governance and hands‑on practice across roles.

Make it hands‑on, social and a bit playful

People learn AI best by doing. Interactive activities are especially valuable here, ranging from simple exercises that illustrate how models learn from data to sandbox environments where people can experiment with AI tools on realistic tasks. When learners can tweak inputs and see outputs change, concepts like bias, overfitting or data drift stop being abstract jargon and start to feel tangible.
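As an illustration of the kind of simple exercise a facilitator might walk through, the sketch below is a deliberately naive, hypothetical ‘model’ written in Python. It learns nothing except the historical approval rate for each group and recommends approval when that rate is 50% or higher. The data, the group labels and the `train` and `predict` functions are invented for teaching purposes and do not describe how any real hiring or approval system works. The point is that a skewed history produces skewed recommendations, and that changing the inputs changes the output.

```python
from collections import defaultdict


def train(records):
    """Learn the share of past 'approve' outcomes for each group (a deliberately naive model)."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approvals, total decisions]
    for group, approved in records:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {group: approvals / total for group, (approvals, total) in counts.items()}


def predict(model, group, threshold=0.5):
    """Recommend approval if the learned rate for this group meets the threshold.

    Groups never seen in training default to a rate of 0.0, so they are never approved.
    """
    return model.get(group, 0.0) >= threshold


# Hypothetical historical decisions, skewed against group "B".
history = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 3 + [("B", False)] * 7
model = train(history)
print(model)  # {'A': 0.8, 'B': 0.3}
print(predict(model, "A"), predict(model, "B"))  # True False

# "Tweak the inputs": add more positive examples for group "B" and retrain (8 of 15 approved).
rebalanced_model = train(history + [("B", True)] * 5)
print(predict(rebalanced_model, "B"))  # True
```

The model never ‘decides’ anything about group B on its merits; it simply mirrors the pattern in its training data, which is a useful prompt for a debrief on where that data came from.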

An effective approach is to take real tasks – for example, drafting a policy summary or building a customer response – and test how AI handles them, where it helps and where it breaks. The learning comes not just from the tool, but from the conversation afterwards: ‘When should I trust the output?’, ‘When should I override it?’ and ‘What checks feel appropriate?’.

Collaborative learning also matters. Group discussions can surface different perspectives and concerns about AI, which helps people to feel less alone and more able to challenge assumptions. In a digital workplace context, bringing HR, IT, legal and business teams together around specific AI use cases helps them to align on what ‘responsible use’ looks like in practice. That shared language is often as valuable as the specific skills.

AI literacy is not an add-on

Most organizations already have some kind of digital skills or digital literacy offer. The question is how to evolve this so that AI becomes a natural part of it, rather than an add‑on. Many frameworks suggest treating AI literacy as a layer that sits on top of existing basics like information evaluation, privacy, security and online collaboration.

In practice, that might mean updating existing modules to:

- show where AI is already active in familiar tools (search, recommendations, summarization)

- help people spot AI‑generated content and evaluate it with a critical eye

- connect AI to existing conversations about data protection, risk and digital wellbeing.

The tools you use to teach AI literacy also make a difference. Interactive demonstrations, low‑stakes experimentation and simple visual explanations can help people to build accurate mental models of AI. The training should not be too product‑specific: durable AI literacy rests on transferable concepts, such as understanding that AI systems are essentially pattern‑spotters working from data and probabilities, rather than on knowing every capability of a particular app.

AI skills for the future

In future, a core set of skills will matter almost everywhere. These include: data literacy (understanding where data comes from, how it might be skewed and what that means); algorithmic thinking (reasoning about inputs and outputs); and the ability to interpret and question AI‑generated content. These skills will be complemented by strong communication, collaboration and ethical reasoning – especially as more work is done in human–AI teams.

As AI takes over repetitive tasks, humans are increasingly asked to frame problems, choose tools, monitor performance and step in when things go off track. That calls for strong critical thinking and judgement. Good AI literacy programmes lean into this by explicitly teaching people to ask questions like: ‘What data might be missing here?’, ‘What assumptions is this model making?’ and ‘What happens if this is wrong?’.

Given how quickly AI and regulation are changing, curriculum design needs to be modular and easy to update. This should include a mix of core modules for everyone (fundamentals, ethics, human–AI collaboration) plus role‑specific pathways (for example, AI for people managers or AI in operations). For organizations, a modular approach makes it easier to iterate without constantly starting from scratch as tools and policies evolve.

Getting practical: AI literacy workshops that work

Digital workplace workshops provide a productive format for AI literacy education, particularly for busy leaders and managers. A simple pattern works well: start with clear learning goals, ground the session in real use cases and blend short inputs with plenty of interaction. A useful rhythm is ‘explain – experience – reflect – apply’: share a concept, let people try it, debrief together and then ask them to map it to their own context.[5,6]

Engaging techniques include live demos where you show an AI tool doing something useful, then pause to unpack what’s really happening under the hood and where it might go wrong. Scenario‑based discussions also work well: ‘The AI suggests rejecting this candidate – what do you do?’ or ‘The chatbot produced this answer – is it good enough to send?’. Sessions also benefit from explicitly surfacing common myths, such as ‘AI is objective’ or ‘AI understands just like a human’, and then challenging them.

Measuring whether workshops are actually making a difference is important if you want programmes to stick. This can be done through a mix of quick before‑and‑after checks, confidence ratings and observation of how people behave in the weeks after training. Some organizations use short scenario‑based assessments where people respond to AI‑related situations pre‑ and post‑training; changes in how they reason through the scenario can be revealing.
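For teams that run pre‑ and post‑training checks, a small calculation can summarize the results. The sketch below assumes each learner answers the same scenario question before and after a workshop, scored on a hypothetical 1–5 scale, with both score lists in the same learner order; the `summarize_change` function and the scores are illustrative only. Pairing scores per learner, rather than comparing two group averages, keeps the focus on individual change.

```python
from statistics import mean


def summarize_change(pre, post):
    """Summarize paired before/after scores; pre[i] and post[i] must belong to the same learner."""
    if len(pre) != len(post) or not pre:
        raise ValueError("need one pre and one post score per learner")
    changes = [after - before for before, after in zip(pre, post)]
    return {
        "mean_change": mean(changes),  # average shift per learner
        "share_improved": sum(change > 0 for change in changes) / len(changes),
    }


# Hypothetical 1-5 ratings from five learners on the same scenario question.
pre_scores = [2, 3, 2, 4, 3]
post_scores = [4, 3, 3, 4, 5]
result = summarize_change(pre_scores, post_scores)
print(result)
```

With small groups, a positive average is a prompt to look more closely at how people reasoned through the scenario, not proof that the workshop caused the change.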

Build community, not just courses

AI literacy should be viewed as a social, rather than just an individual, journey. People make sense of AI together, by talking about what they’re seeing, sharing tips and comparing experiences. Communities of Practice, discussion channels and cross‑functional meet‑ups can all help keep the conversation going between formal sessions.

External partners can also play a useful role. Linking your internal efforts to sector‑wide initiatives, higher‑education partners or public AI literacy projects can bring in fresh materials and perspectives, and help you stay aligned with frameworks like the U.S. DOL AI Literacy Framework and emerging expectations around the EU AI Act.[3,4] For smaller organizations, tapping into these wider ecosystems can make the difference between a one‑off push and a sustained effort.

Community‑based approaches are also important for inclusion. Studies point out that people’s experiences of AI vary widely depending on their role, background and comfort with technology.[7,8] Creating spaces where colleagues can ask basic questions without embarrassment, share concerns and raise ethical dilemmas helps support the emotional and social sides of AI literacy.

Ethics, critical thinking and ‘how AI actually works’

Ethics is central to meaningful AI literacy. People need the tools to think through issues like bias, fairness, transparency and accountability in concrete situations. Done well, these sessions move beyond abstract ‘AI ethics’ into practical questions like ‘Who is responsible for this outcome?’ and ‘What would fairness look like here?’.

Critical evaluation should be a core outcome of AI literacy education: teaching people to ask who built the system, what data it was trained on, whose interests it serves and how problems are handled. The simple habit of pausing to ask ‘Should we use AI here?’ is one of the clearest markers of real AI literacy.

A big part of this is helping people to understand, at a conceptual level, how AI produces outputs. When people think of AI as a sort of all‑knowing brain, they are more likely to over‑trust it; when they see it as a pattern‑recognition system working from past data, they are more likely to use appropriate human judgement.[1,5,9] Helping people to build accurate mental models – for example, that generative AI is predicting the next likely word based on training data rather than ‘thinking’ or ‘knowing’ – can transform how they use it.
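The ‘predicting the next likely word’ idea can be demonstrated with a toy example. The sketch below is a hypothetical bigram counter in Python: it records which word followed which in a tiny training text, then predicts the most frequent follower. Real generative AI systems use large neural networks working over tokens and much longer context, so this is a teaching simplification, but it captures two points learners often miss: the output reflects patterns in the training data, and the toy model has nothing to go on for a word that never appeared in that text.

```python
from collections import Counter, defaultdict


def build_bigram_model(text):
    """Count, for each word, which words followed it in the (tiny) training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower seen in training, or None if the word was never seen."""
    followers = model.get(word.lower())  # .get() avoids creating empty entries in the defaultdict
    if not followers:
        return None
    return followers.most_common(1)[0][0]


training_text = "the report is late the report is ready the report is late again"
model = build_bigram_model(training_text)
print(predict_next(model, "is"))      # late ('late' followed 'is' twice, 'ready' once)
print(predict_next(model, "budget"))  # None (never seen in training)
```

The model ‘knows’ nothing about reports or deadlines; it only reproduces which word most often came next, which is why the answer for ‘is’ would flip if the training text changed.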

For digital workplace, HR and L&D teams, this is where AI literacy really goes beyond tool training. AI literacy programmes that stick help people to understand enough about how AI works, enough about where it can fail, and enough about ethics and context to enable them to make good calls day to day – even as the tools themselves continue to change.

Conclusion: AI literacy programmes that stick

A sustainable AI literacy programme is less about a single ‘big bang’ course and more about building a steady rhythm of ongoing education. To keep engagement and interest high, organizations need to weave AI literacy into existing learning channels, leadership conversations and day‑to‑day work, so that it feels like a lasting capability rather than a time‑boxed project.

One practical strategy is to shift from occasional, heavy interventions to lighter, more frequent touchpoints: short refreshers linked to new use cases, ‘show and tell’ sessions where teams share experiments, and micro‑learning nudges that respond to changes in tools or regulation. Programmes should be designed for inclusivity from the start: offer content in multiple formats, support different learning needs and ensure that every role, location and community can participate, not just the most digitally confident.

Obtaining continuous feedback is critical if these programmes are going to improve rather than decay. This means treating AI literacy itself as a learning system: using surveys, quick pulse checks, workshop debriefs and real‑world observations to understand what’s working, what’s confusing and where people still feel exposed. Those insights should then feed into regular iterations of content, formats and support, so the programme stays aligned with how AI is actually being used in the organization.

Ultimately, sustaining AI literacy is about culture as much as curriculum. The goal is to normalize curiosity about AI, to reward thoughtful questioning and to position ongoing learning as part of everyone’s job. If leaders keep signaling that AI is here to augment human work (and that learning how to use it wisely is a long‑term investment), organizations stand a much better chance of growing a workforce that partners with AI confidently, critically and ethically over time.

Ask yourself…

As a digital workplace leader, can you answer the following questions about the state of your workforce’s digital literacy with confidence? If not, let DWG help you create a clear and specific strategy and roadmap for driving up your organization’s Digital IQ.

- How does our AI strategy and roadmap measurably support the organization’s strategic goals, and what workforce outcomes are we targeting?

- Which 3–5 high-volume or high-friction workforce tasks are our best candidates for AI-driven efficiency gains right now, and why?

- Where are teams already using AI in day-to-day work, and what evidence do we have that it is improving productivity or quality?

- How confident is our workforce in using AI-enabled tools, and what training, support, and enablement gaps are most limiting adoption?

- What legal, regulatory, and policy requirements govern our workforce use of AI (including data privacy and IP), and how are we ensuring compliance?

- Do we have clear, communicated guidelines for when AI can be used in workplace decisions, and how do we enforce transparency and human oversight?

- Who is accountable for AI-driven decisions and outcomes across the workforce (owners, escalation paths, audits), and how is that accountability operationalized?

References

[1] A conceptual framework for designing artificial intelligence literacy programmes for educated citizens (Conference Proceedings of the 25th Global Chinese Conference on Computers in Education – GCCCE 2021).

[2] AI is now a leadership mandate (Fast Company).

[3] The U.S. Department of Labor’s Artificial Intelligence Literacy Framework (U.S. Department of Labor).

[4] The EU AI Act and AI literacy: Why every employee needs training now (Techclass).

[5] What is AI literacy? Competencies and design considerations (CHI Conference Paper, 2020).

[6] Designing an AI literacy program (IAPP).

[7] AI literacy and workforce transformation (Chapter 7 in: Hemalatha Arunachalam, AI in business analytics and decision-making).

[8] Universal design as the foundation of AI literacy: Preparing the workforce of tomorrow (Workforce GPS).

[9] AI literacy in the workforce (Digital Promise).
