7 essential steps for building an AI‑ready skills strategy in your organization

AI-ready skills are fast becoming a strategic necessity for enterprises, not a discretionary investment.

Understanding AI readiness

As organizations accelerate the adoption of generative and agentic AI, many are discovering that access to powerful tools does not automatically translate into meaningful business value. Pilots stall, anxiety rises and adoption plateaus when workforce readiness, leadership alignment and cultural capability are not prioritized.

Much of the current conversation on AI readiness focuses on technology, infrastructure or individual tools. However, evidence from across industries suggests that the limiting factor is rarely the accessibility of the technology, but whether people across the organization can confidently, responsibly and productively integrate AI into their everyday work.

From a digital workplace and workforce capability perspective, AI readiness should be understood as an organizational capability – one that sits at the intersection of skills, culture, governance and learning, rather than as a narrow training or change initiative.

The same challenges are widely observed across global enterprises: widening skills gaps, uneven adoption, rising technostress and uncertainty about accountability as AI systems become more autonomous.

Why it matters

Understanding AI readiness matters because AI is already reshaping how work is structured, valued and performed across the enterprise.

Readiness, in this context, is the bridge between technological potential and workforce transformation. Without the human skills, confidence and governance to work effectively alongside AI, organizations struggle to move beyond isolated use cases. To understand what an AI-ready skills strategy must enable, it is necessary to examine how AI is changing work itself – at the level of tasks, roles and responsibilities.

The role of AI in workforce transformation

While entire roles are rarely automated away, their nature is significantly changing, with task composition, required skills and risk profiles all in flux.[1]

At the task level, AI is shifting work from manual and routine cognitive tasks to higher-value activities. For example, junior accountants may now ‘oversee’ AI-driven audits, rather than perform repetitive data entry. Frontline staff are encouraged to use AI for record-keeping and scheduling to free up time for human-to-human interaction.[2]

At the same time, human roles are evolving from ‘doer’ to ‘orchestrator’. With around 57% of UK businesses expecting to adopt agentic AI (autonomous systems that can pursue goals independently) within the next three years, workers are moving from prompting AI assistants to managing autonomous digital partners.[3]

Yet, despite widespread recognition of the issue, the gap between awareness and action remains stark. While 97% of UK firms report an AI skills gap, only 11% have undertaken formal AI training in the last year.[4] Skills strategies must close this distance between recognizing the problem and acting on it.

Here we outline seven practical steps for building an AI-ready skills strategy. These steps provide a structured approach to thinking about how organizations can move from awareness of AI’s potential to sustained, responsible capability at scale.

Step 1: Assess current skill gaps

Analysing existing competencies

The first step in designing an AI-ready skills strategy is understanding existing competencies across the entire skills spectrum, not just technical ones. AI preparedness depends heavily on non-technical capabilities such as critical thinking, data literacy, ethical judgement, collaboration and change resilience.[1] This means mapping current strengths and weaknesses in both hard and soft skills, rather than assuming that only data scientists or engineers are affected by AI adoption.

While the introduction of new technology often causes a temporary drop in efficiency, it typically forces an upgrading effect, pushing employees towards more complex, analytical work rather than routine tasks. This shift needs to be actively managed to avoid a permanent loss of skills or confidence; as one analysis notes, “We need new ‘metacognitive skills’ to evaluate AI outputs – but that’s precisely what novices in the danger zone lack. You can’t develop judgment about what you don’t know.”[5]

Identifying AI readiness skills needed

Skill needs and requirements differ by industry and role, but a common theme is a gap in understanding AI concepts, capabilities and limitations.[6]

This often shows up as informal or experimental use of AI tools without clear guidance on quality, risk or accountability. In parallel, there is pressure for leaders and frontline staff to develop the ability to recognize bias, safeguard privacy and know when to escalate issues.

The World Economic Forum highlights “durable skills” – including creativity, problem-solving and self-management – as the hard currency of the labour market in an AI-mediated future.[1] In practice, this means focusing on critical thinking, systems thinking and ethical oversight, rather than investing in ‘how-to’ tips on the latest application.

These non-technical skills are portable and can be applied across sectors, strengthening mobility, interdisciplinary collaboration and resilience in career pathways. Prioritizing transferable competencies (e.g. critical thinking, digital collaboration and creativity) also helps organizations to reduce duplication of training and support internal talent mobility.[6]

Step 2: Develop a strategic workforce planning framework

Aligning workforce goals with business objectives

Strategic workforce planning is a forward-looking process whereby organizations consider future needs based on strategy and plan for the number of people and the skills required to achieve short- and medium-term goals.

The obvious place to begin is with the strategic goals of the business. Workforce requirements will depend on what the business wants to achieve, both in terms of AI-enabled products and services, and internal productivity or experience gains. This includes connecting individual roles with organizational objectives, and ensuring employees understand how their work contributes to the company’s strategy.

Incorporating AI into strategic planning

The biggest change to strategic workforce planning is the need to incorporate AI into the process. Businesses acknowledge that AI is changing not only the way people work, but the very nature of job roles themselves.

According to McKinsey, up to 30% of hours worked today could be replaced through automation over the next four years, although the impact will vary by sector, occupation and country.[7]

Planning, therefore, must account for ‘digital coworkers’ that act without constant human prompts, including the shift from prompt-based generative assistants to more autonomous decision-support agents.[8] Traditional hiring is now augmented by automation of tasks and the use of freelance experts as organizations seek to close emerging AI skills gaps. At the same time, questions of accountability and governance must be embedded into workforce planning: when an AI agent makes a mistake, leaders need clear lines of human accountability and human-in-the-loop controls that satisfy evolving UK and EU regulatory standards.

Step 3: Create comprehensive competency frameworks

Initially, organizations may experience a lag in aggregate gains as the workforce spends time learning new systems before exponential growth occurs.[9] During this period, employees who feel less competent than before can experience anxiety, decreased morale and fear of appearing incapable. To mitigate this, organizations are increasingly focusing on reskilling their employees through targeted training and support. A robust competency framework helps by clearly articulating expectations.

Mapping skills to roles and functions

Mapping skills to roles and functions helps set out the capabilities required for each job family, making it easier to align recruitment, performance and learning. This mapping typically involves analysing job responsibilities, tasks, their frequency and complexity, and then defining skills requirements based on market research, existing job descriptions and insights from people currently in the roles. The resulting skills map feeds into the design of the competency framework, where competencies are categorized and proficiency levels defined.
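As a minimal sketch of how such a skills map might be put to work, the Python snippet below compares the proficiency a role requires with what an employee currently demonstrates. The role name, skill names, sample data and the 0–4 proficiency scale are all hypothetical illustrations, not part of any published framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleProfile:
    """A role and the minimum proficiency it requires per skill.

    Levels use a hypothetical 0-4 scale (0 = none, 4 = expert).
    """
    name: str
    required: dict[str, int]


def skill_gaps(role: RoleProfile, current: dict[str, int]) -> dict[str, int]:
    """Return each skill that falls short, with the size of the shortfall."""
    gaps = {}
    for skill, needed in role.required.items():
        have = current.get(skill, 0)  # a skill not yet assessed counts as level 0
        if have < needed:
            gaps[skill] = needed - have
    return gaps


# Illustrative role and assessment data, invented for this example.
analyst = RoleProfile(
    name="Finance analyst",
    required={"data literacy": 3, "critical thinking": 3, "ethical judgement": 2},
)
employee = {"data literacy": 2, "critical thinking": 3}

print(skill_gaps(analyst, employee))
```

Treating an unassessed skill as level 0 is a deliberate simplification here: it surfaces skills that were never measured alongside those that were measured and found lacking.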

Emerging taxonomies can support this work. For instance, a four-tier AI skills taxonomy that segments the workforce into ‘citizens’, ‘workers’, ‘professionals’ and ‘leaders’ provides a common language for defining literacy levels across roles. This allows HR and digital workplace teams to specify, for example, that all ‘citizens’ need baseline AI literacy, while ‘professionals’ and ‘leaders’ require deeper capabilities in evaluation, oversight and governance.[10]

Establishing AI literacy programmes

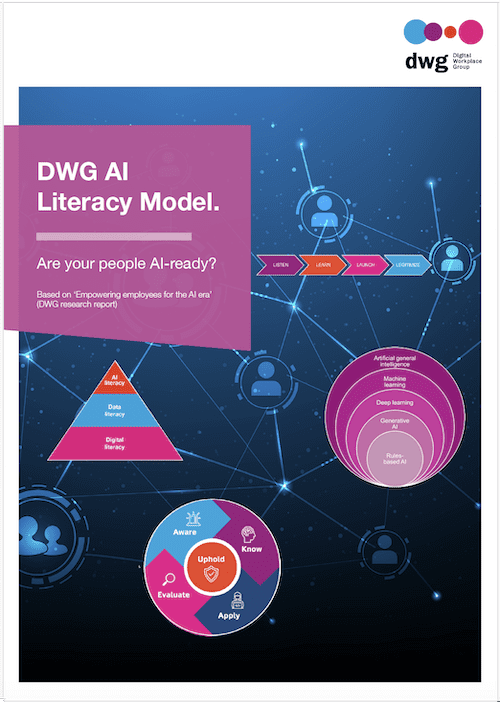

An effective way to ensure AI readiness among the workforce is to deliver a targeted programme of AI literacy aligned to those competency expectations. DWG’s Digital Workplace AI Literacy Model outlines five key dimensions of AI literacy[11]:

- AWARE: Awareness of AI capabilities and acceptance of them.

- KNOW: Understanding how to use AI at work.

- APPLY: Using AI to augment work tasks and projects.

- EVALUATE: Critically evaluating the validity and reliability of AI.

- UPHOLD: Using AI in a responsible manner.

Using this model, organizations can create targeted literacy programmes and specific training pathways to support the workforce in developing the capabilities to confidently engage with AI in the workplace. By investing in AI literacy, organizations not only reduce risk but also build a more adaptable workforce that can support the goals of the business as AI evolves.

Step 4: Design digital skills training programmes

Tailoring training to diverse learning preferences

Individuals have unique learning preferences which require a range of delivery methods to ensure everyone benefits, from self-paced e‑learning and short videos to workshops, coaching and peer learning communities. Strategies include using blended learning approaches (e.g. combining online and in-person learning) and creating learning content in multiple formats and media, such as videos, text and interactive simulations.

Research in learning science suggests that people retain and apply skills more effectively when learning is spaced, contextual and integrated into real work. For organizations, this means shifting investment away from one-off AI awareness sessions towards shorter, role-relevant learning moments supported by coaching, peer exchange and feedback in the flow of work.

Leveraging technology for training delivery

Technology-enabled delivery makes it easier to tailor learning to diverse preferences and specific roles. It offers flexible, engaging and personalized experiences, including interactive simulations, and allows learning programmes to scale across locations.

The introduction of AI into learning tools has made high-quality training more accessible and affordable to create. For example, text-to-speech AI video tools allow subject-matter experts to generate professional training videos quickly, or to repurpose a policy document into multiple content formats.

Many organizations are also using AI-enabled learning platforms to recommend relevant content, generate practice questions or summarize long-form resources into digestible pieces. Used thoughtfully, these tools can make corporate training more interesting, adaptable and effective, while modelling responsible AI use in a low-risk context.[11]

Step 5: Foster a continuous learning culture

The solution to AI disruption is not abandoning AI but recognizing that what we often label ‘inefficiency’ – time spent experimenting, making mistakes and reflecting – is in fact the foundation of competence. The struggle is the learning. To stay ahead, organizations need to cultivate cultures where experimentation, feedback and curiosity are normal parts of everyday work rather than rare events.

Encouraging lifelong learning and adaptability

In an era of rapid change, one way to stay relevant is to keep learning and adapting. Learning should be integrated into business as usual, supported by time, recognition and leadership behaviours that nurture skill-building and knowledge-sharing.

By investing in tailored learning paths, organizations empower their employees to develop their capabilities and keep up with change. Studies on workforce adaptability highlight the importance of self-efficacy – i.e. the belief and confidence in one’s own capability – in periods of technological change. In practice, organizations that make time for experimentation, encourage reflection and questions, and visibly reward learning behaviours are better able to sustain AI adoption without driving burnout.

Maintaining ‘learning ladders’ is also vital. Companies must resist the false economy of eliminating junior roles simply because AI can automate some tasks. TSMC, for example, expanded its apprentice programme despite high levels of automation because it understands that today’s junior employees are tomorrow’s experts who will know when the AI is wrong.[5] Without these developmental rungs, organizations risk creating a generation of workers who supervise systems they do not fully understand.

Incorporating feedback and adaptation mechanisms

Continuous learning needs to be balanced to avoid burnout and technostress. High AI adoption can be linked to increased technostress if not balanced by investment in manager capability, workload design and resilience training.[12] People need tools to independently clarify problems, identify resources and experiment with creative solutions, alongside psychological safety to surface issues early on.

The most important component of rapid learning is high-quality feedback. Organizations can embed feedback loops through regular retrospectives, performance conversations and forums where employees can share patterns, risks and emerging practices.

Step 6: Implement AI literacy programmes organization-wide

Building awareness and understanding of AI

Literacy programmes should go beyond tool demonstrations to include explainability – how to audit an AI’s decision-making process and understand its limitations. Without this, organizations risk either underutilization (because people do not trust the system) or blind over-reliance (because they trust it too much).[13]

Structured curricula anchored in models like DWG’s AI Literacy Model can help ensure consistency while still allowing tailoring by function and seniority. For example, all staff might complete foundational modules on AWARE and KNOW, while managers and specialists also develop EVALUATE and UPHOLD capabilities linked to governance frameworks, risk management and regulatory requirements.
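The tiering in this example can be sketched in a few lines of Python. This is an illustration only: the role labels (`"manager"`, `"specialist"`) and the function name are assumptions, and the APPLY dimension is left unassigned because the example above does not place it.

```python
# Dimensions named in the example above; the tier split follows that example only.
FOUNDATION = ("AWARE", "KNOW")     # foundational modules for all staff
ADVANCED = ("EVALUATE", "UPHOLD")  # additional modules for managers and specialists

# Hypothetical role labels used to decide who receives the advanced modules.
ADVANCED_ROLES = {"manager", "specialist"}


def modules_for(role_type: str) -> list[str]:
    """Return the literacy modules assigned to a given role type."""
    modules = list(FOUNDATION)
    if role_type in ADVANCED_ROLES:
        modules.extend(ADVANCED)
    return modules


print(modules_for("staff"))
print(modules_for("manager"))
```

Keeping the tier definitions as data rather than logic makes it straightforward to tailor the assignment by function or seniority without rewriting the function.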

Providing resources and support for employees

Training courses alone rarely lead to sustained behaviour change; learning is a continuous process embedded in repetition and reinforcement. AI literacy should be supported by regular workshops, communities of practice, guidance and events that challenge and change behaviour. These programmes should track, measure and reward behaviour change, for example, through performance objectives, recognition of responsible AI use and stories that highlight learning from failure and experimentation.

Some organizations combine formal training with enablement mechanisms such as AI ‘clinics’, champions networks and sandboxes where employees can safely test tools on staging data. This combination of structured learning and ongoing support helps embed AI use as part of everyday work rather than a one-off initiative.

Step 7: Measure success and iterate

Key performance indicators for skills strategy

To understand whether an AI-ready skills strategy is effective, organizations should move beyond simple training completion rates. More meaningful indicators include time-to-value for AI initiatives, adoption and usage patterns, changes in error rates or quality, and measures of algorithmic trust (e.g. whether employees feel able to challenge AI outputs).[4]

Rather than stopping at satisfaction scores, organizations can measure changes in behaviour (e.g. how often teams use AI to support tasks, and how they escalate risks) and organizational results (e.g. reduced cycle times, improved customer experience or better decision quality). Linking AI skills interventions explicitly to these metrics helps sustain investment and focus over time.
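To make the contrast between completion rates and behavioural indicators concrete, here is a rough Python sketch. The field names, the sample figures and the idea of using a "challenge rate" as a proxy for algorithmic trust are assumptions for illustration, not established metrics.

```python
from dataclasses import dataclass


@dataclass
class TeamSnapshot:
    """Hypothetical per-team figures for one reporting period."""
    team: str
    headcount: int
    trained: int             # people who completed AI training
    active_ai_users: int     # people who used an AI tool for work in the period
    outputs_reviewed: int    # AI outputs that a human checked
    outputs_challenged: int  # reviewed outputs that were corrected or escalated


def ratio(numerator: int, denominator: int) -> float:
    """Divide safely, returning 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def indicators(s: TeamSnapshot) -> dict[str, float]:
    """Place the training completion rate alongside behaviour-based measures."""
    return {
        "completion_rate": ratio(s.trained, s.headcount),
        "adoption_rate": ratio(s.active_ai_users, s.headcount),
        "challenge_rate": ratio(s.outputs_challenged, s.outputs_reviewed),
    }


# Invented sample data: training is widely completed, but usage lags behind.
snapshot = TeamSnapshot("Claims", headcount=20, trained=18,
                        active_ai_users=9, outputs_reviewed=50, outputs_challenged=5)
print(indicators(snapshot))
```

In this invented case a high completion rate sits next to a much lower adoption rate, which is the kind of gap that completion figures alone would hide.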

Continuous improvement and evolution of the strategy

Finally, AI-ready skills strategies need to be treated as living systems. There will naturally be a disconnect between the pace of AI adoption and the workforce’s ability to use new tools, especially given the paradox where mastery of old methods can initially make skilled workers feel unskilled with new ones. As established routines are disrupted, even high performers may struggle with unfamiliar tools and workflows.

Regularly reviewing skills maps, competency frameworks, training content and governance policies enables organizations to respond to new use cases, regulations and risks. Engaging employees in this review through surveys, focus groups and communities of practice helps ensure that strategies remain grounded in day-to-day reality rather than in abstract plans. In this way, building an AI-ready skills strategy becomes an ongoing process of sensing, learning and adapting, rather than a one-off programme.

Ask yourself…

As a digital workplace leader, can you answer the following questions about the state of your workforce’s digital literacy with confidence? If not, let DWG help you create a clear and specific strategy and roadmap for driving up your organization’s Digital IQ.

- How does our AI strategy and roadmap measurably support the organization’s strategic goals, and what workforce outcomes are we targeting?

- Which 3–5 high-volume or high-friction workforce tasks are our best candidates for AI-driven efficiency gains right now, and why?

- Where are teams already using AI in day-to-day work, and what evidence do we have that it is improving productivity or quality?

- How confident is our workforce in using AI-enabled tools, and what training, support, and enablement gaps are most limiting adoption?

- What legal, regulatory, and policy requirements govern our workforce use of AI (including data privacy and IP), and how are we ensuring compliance?

- Do we have clear, communicated guidelines for when AI can be used in workplace decisions, and how do we enforce transparency and human oversight?

- Who is accountable for AI-driven decisions and outcomes across the workforce (owners, escalation paths, audits), and how is that accountability operationalized?

References

[1] Future of Jobs Report 2025 (World Economic Forum Insight Report).

[2] Government expands free AI training for 10m workers (Mirage News).

[3] AI Labour Market Survey 2025 report (UK Government – Department for Science, Innovation and Technology).

[4] AI Labour Market Survey 2025 (Gardiner & Theobald for the UK Department for Science, Innovation and Technology).

[5] Is AI creating incompetent experts? (Kiron Ravindran, IE Insights).

[6] AI skills for the UK workforce: Executive summary with introduction and next steps (Skills England).

[7] The critical role of strategic workforce planning in the age of AI (McKinsey: People & Organizational Performance).

[8] AI grew up and got a job: Lessons from 2025 on agents and trust (Google Cloud: Blog).

[9] The impact of AI on the labour market (Tony Blair Institute for Global Change).

[10] AI skills for life and work: Rapid evidence review (UK Government – Department for Science, Innovation and Technology; Department for Culture, Media and Sport).

[11] Unlocking the AI opportunity: The AI literacy key (DWG: Expert Blog).

[12] Good Work Index 2025 (CIPD).

[13] Open education principles: Resisting the metrics of AI black boxes (Tel Amiel et al., UNESCO).
